Algorithms

Question 1 |

A |  |

B |  |

C |  |

D |  |

In this question they given three main constraints

- The input array is in sorted order

- Use recursive binary search

- Worst case number of operations

If the array is in sorted order then the worst case time complexity is O(logn)

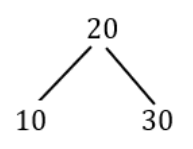

Ex: 10, 20, 30

The binary search approach is using either recursive or iterative method is

Step-1: element = middle, the element is found and return the index.

Step-2: element > middle, search for the element in the sub-array starting from middle+1 index to n.

Step-3: element < middle, search for element in the sub-array starting from 0 index to middle -1.

Note: The worst case happens when we want to find the smallest/largest farthest node. So, it will not take more than O(logn) time.

Question 2 |

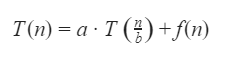

Which one of the following options is correct about the recurrence T(n)?

A |  |

B |  |

C |  |

D |  |

Question 3 |

S1: There exists a minimum weighted edge in G which is present in every minimum spanning tree of G.

S2:If every edge in G has distinct weight, then G has a unique minimum spanning tree.

Which of the following options is correct?

A | S1is false and S2is true.

|

B | S1is true and S2is false. |

C | Both S1 and S2are false. |

D | Both S1 and S2are true. |

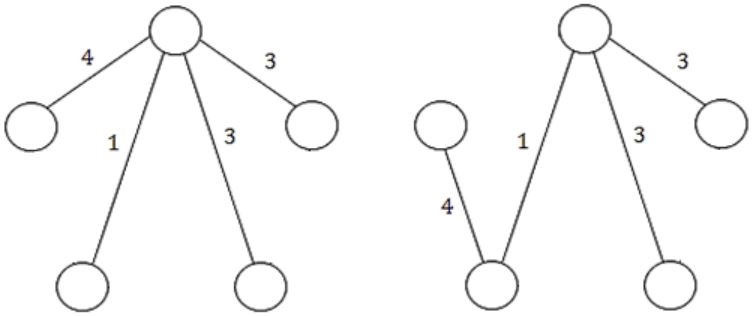

Statement-1: FALSE: The given statement is not valid for all the cases because they are not mentioned, edge weights are in distinct or duplicate. So, we can take any random edge weights for the given statement.

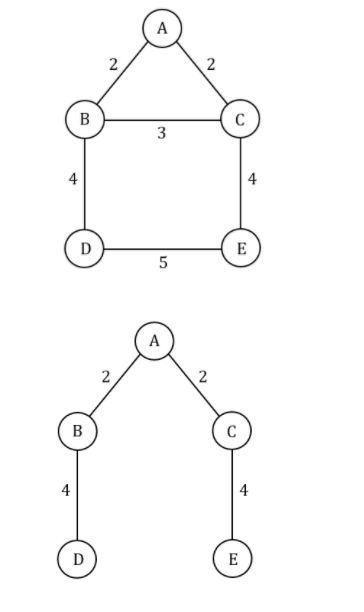

Example:

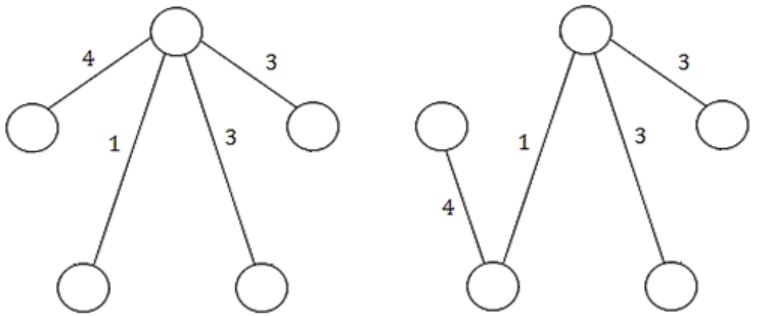

Statement-2: TRUE: Using the kruskal’s (or) prim's algorithm we get a unique MST when there is a unique edge weight.

Example:

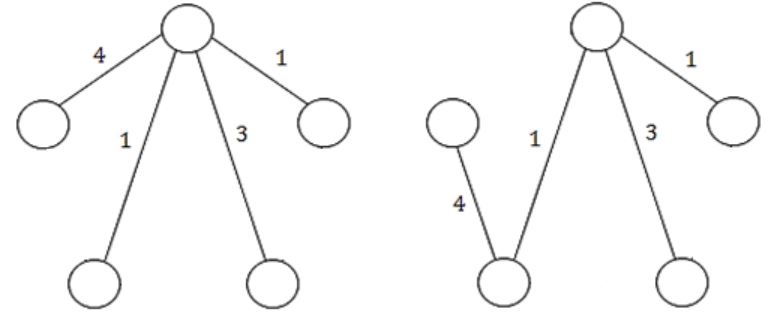

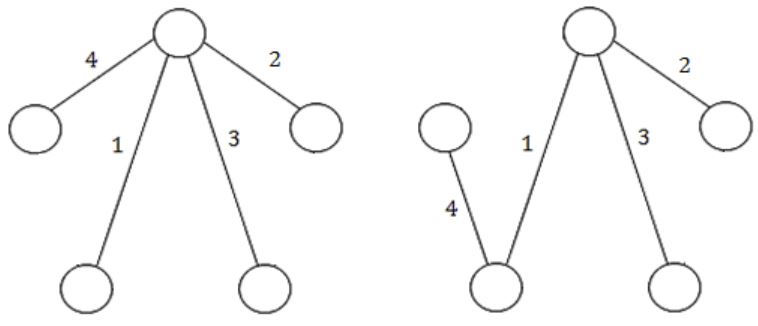

Based on the above graph, we get the MST is

Question 4 |

The sum of the quality-scores of all the vertices in the graph shown above is _________.

A | 929 |

Question 5 |

A |  |

B |  |

C |  |

D |  |

The heap is nothing but a complete binary tree and uses linear search to find the elements.

In min heap, the parent key is less than or equal to (≤) the child keys. The maximum values should present in the farthest leaf node for the worst case time complexity.

To traverse all the elements in a heap will take O(n) time for the worst case because it uses the linear search to find the element.

Question 6 |

Merge sort uses

A | Divide and conquer strategy |

B | Backtracking approach |

C | Heuristic search |

D | Greedy approach |

Question 7 |

The postfix expression for the infix expression

A + B*(C + D)/F + D*E is:

A | AB + CD + *F/D + E* |

B | ABCD + *F/DE*++ |

C | A *B + CD/F *DE++ |

D | A + *BCD/F* DE++ |

E | None of the above |

A B C D + * F / + D E * +

Question 8 |

Which of the following statements is true?

- I. As the number of entries in a hash table increases, the number of collisions increases.

II. Recursive programs are efficient

III. The worst case complexity for Quicksort is O(n2)

A | I and II |

B | II and III |

C | I and IV |

D | I and III |

Recursive program requires stack for storing the function state. Any recursive program can be rewritten as an iterative one. Advantage of recursion is "less programming effort", while it always lags behind iterative one in terms of performance.

Question 9 |

Consider the following sequence of numbers

92, 37, 52, 12, 11, 25

Use bubble sort to arrange the sequence in ascending order. Give the sequence at the end of each of the first five passes.

A | Theory Explanation. |

Question 10 |

How many minimum spanning trees does the following graph have? Draw them. (Weights are assigned to the edge).

A | Theory Explanation. |

Question 11 |

For parameters a and b, both of which are ω(1), T(n) = T(n1/a)+1, and T(b)=1.

Then T(n) is

A | θ(loga logb n) |

B | θ(logb loga n)

|

C | θ(log2 log2 n)

|

D | θ(logab n)

|

T(n) = [T(n1/a2)+1] + 1

= [T(n1/a3)+1] + 2

= [T(n1/a3)] + 3

= [T(n1/ak)] + b

= logb n = ak

= log logb n = k log a

= k= loga logb n

T(n)=1+loga logb n

T(n)=O(loga logb n)

Question 12 |

Let G = (V,E) be a weighted undirected graph and let T be a Minimum Spanning Tree (MST) of G maintained using adjacency lists. Suppose a new weighted edge (u,v) ∈ V×V is added to G. The worst case time complexity of determining if T is still an MST of the resultant graph is

A | θ(|E|+|V|) |

B | θ(|E| log|V|) |

C | θ(|E||V|) |

D | θ(|V|) |

• As T is a minimum spanning tree and we need to add a new edge to existing spanning tree.

• Later we need to check still T is a minimum spanning tree or not, So we need to check all vertices whether there is any cycle present after adding a new edge.

• All vertices need to traverse to confirm minimum spanning tree after adding new edge then time complexity is O(V).

Method-2:

Time Complexity:

Total vertices: V, Total Edges : E

• O(logV) – to extract each vertex from the queue. So for V vertices – O(VlogV)

• O(logV) – each time a new pair object with a new key value of a vertex and will be done at most once for each edge. So for total E edge – O(ElogV)

• So overall complexity: O(VlogV) + O(ElogV) = O((E+V)logV) = O(ElogV)

Note: Method-1 is the most appropriate answer for giving a question.

Question 13 |

Consider a graph G = (V, E), where V = {v1, v2, …, v100}, E = {(vi, vj) | 1 ≤ i < j ≤ 100}, and weight of the edge (vi, vj) is |i - j|. The weight of the minimum spanning tree of G is ______.

A | 99 |

• N =100

• Edge weight is |i-j| for Edge (vi,vj) {1<=i<=100}

• The weight of edge(v1,v2) is 1 , edge(v5,v6) is 1.

• So, 99 edges of weight is 99.

Question 14 |

Which of the following is false?

A |  |

B |  |

C |  |

D |  |

Question 15 |

The recurrence relation

T(1) = 2 T(n) = 3T(n/4)+n

has the solution, T(n) equals to

A | O(n) |

B | O(log n) |

C | O(n3/4) |

D | None of the above |

Question 16 |

The average number of key comparisons done on a successful sequential search in list of length n is

A | log n |

B | n-1/2 |

C | n/2 |

D | n+1/2 |

= No. of comparisons if element present in 1st position + No. of comparisons if element present in 2nd position + ............. + No. of comparisons if element present in nth position

= 1 + 2 + 3 + ... + n

= n(n+1)/2

Since there are n elements in the list, so average no. of comparisons

= Total comparisons/Total no. of elements

= (n(n+1)/2)/n

= n+1/2

Question 17 |

Quicksort is run on two inputs shown below to sort in ascending order taking first element as pivot,

(i) 1,2,3,...,n (ii) n,n-1,n-2,...,2,1

Let C1 and C2 be the number of comparisons made for the inputs (i) and (ii) respectively. Then,

A | C1 < C2 |

B | C1 > C2 |

C | C1 = C2 |

D | we cannot say anything for arbitrary n. |

So, option is (C) is correct.

Question 18 |

A two dimensional array A[1...n][1...n] of integers is partially sorted if

∀i, j ∈ [1...n−1], A[i][j] < A[i][j+1] and

A[i][j] < A[i+1][j]

Fill in the blanks:

(a) The smallest item in the array is at A[i][j] where i=............and j=..............

(b) The smallest item is deleted. Complete the following O(n) procedure to insert item x (which is guaranteed to be smaller than any item in the last row or column) still keeping A partially sorted.

procedure insert (x: integer);

var i,j: integer;

begin

(1) i:=1; j:=1, A[i][j]:=x;

(2) while (x > ...... or x > ......) do

(3) if A[i+1][j] < A[i][j] ......... then begin

(4) A[i][j]:=A[i+1][j]; i:=i+1;

(5) end

(6) else begin

(7) ............

(8) end

(9) A[i][j]:= .............

end

A | Theory Explanation. |

Question 19 |

Insert the characters of the string K R P C S N Y T J M into a hash table of size 10.

Use the hash function

h(x) = (ord(x) – ord("a") + 1) mod10

and linear probing to resolve collisions.

(a) Which insertions cause collisions?

(b) Display the final hash table.

A | Theory Explanation. |

Question 20 |

A complete, undirected, weighted graph G is given on the vertex {0, 1,...., n−1} for any fixed ‘n’. Draw the minimum spanning tree of G if

(a) the weight of the edge (u,v) is ∣u − v∣

(b) the weight of the edge (u,v) is u + v

A | Theory Explanation. |

Question 21 |

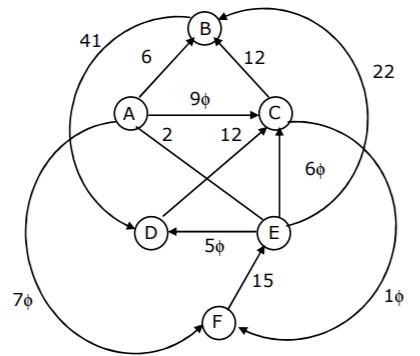

Let G be the directed, weighted graph shown in below figure.

We are interested in the shortest paths from A.

(a) Output the sequence of vertices identified by the Dijkstra’s algorithm for single source shortest path when the algorithm is started at node A.

(b) Write down sequence of vertices in the shortest path from A to E.

(c) What is the cost of the shortest path from A to E?

A | Theory Explanation. |

Question 22 |

Consider the following program that attempts to locate an element x in a sorted array a[] using binary search. Assume N>1. The program is erroneous. Under what conditions does the program fail?

var i,j,k: integer; x: integer;

a:= array; [1...N] of integer;

begin i:= 1; j:= N;

repeat k:(i+j) div 2;

if a[k] < x then i:= k

else j:= k

until (a[k] = x) or (i >= j);

if (a[k] = x) then

writeln ('x is in the array')

else

writeln ('x is not in the array')

end; A | Theory Explanation. |

Question 23 |

The correct matching for the following pairs is

(A) All pairs shortest path (1) Greedy (B) Quick Sort (2) Depth-First search (C) Minimum weight spanning tree (3) Dynamic Programming (D) Connected Components (4) Divide and and Conquer

A | A – 2 B – 4 C – 1 D – 3 |

B | A – 3 B – 4 C – 1 D – 2 |

C | A – 3 B – 4 C – 2 D – 1 |

D | A – 4 B – 1 C – 2 D – 3 |

Quick sort - Divide and Conquer

Minimum weight Spanning tree - Greedy

Connected components - Depth-First search

Question 24 |

Let T(n) be the function defined by T(1)= 1, T(n)= 2T(⌊n/2⌋) + √n for n≥2. Which of the following statement(s) is true?

A | T(n) = O(√n) |

B | T(n) = O(n) |

C | T(n) = O(log n) |

D | None of the above |

Question 25 |

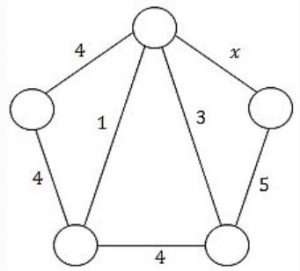

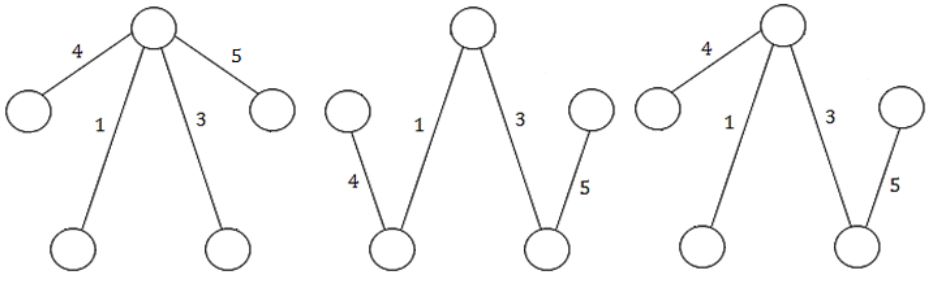

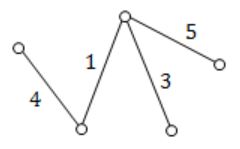

Consider the following undirected graph G:

Choose a value for x that will maximize the number of minimum weight spanning trees (MWSTs) of G. The number of MWSTs of G for this value of x is _________.

A | 4 |

B | 5 |

C | 6 |

D | 7 |

If x = 5 then the total number of MWSTs are 4.

If r = 1

If r = 2

If r = 3

If r = 4

If r = 5

Question 26 |

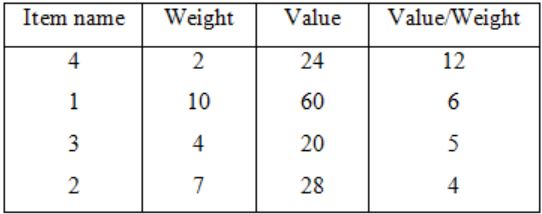

Consider the weights and values of items listed below. Note that there is only one unit of each item.

The task is to pick a subset of these items such that their total weight is no more than 11 Kgs and their total value is maximized. Moreover, no item may be split. The total value of items picked by an optimal algorithm is denoted by Vopt. A greedy algorithm sorts the items by their value-to-weight ratios in descending order and packs them greedily, starting from the first item in the ordered list. The total value of items picked by the greedy algorithm is denoted by Vgreedy.

The value of Vopt − Vgreedy is ______ .

A | 16 |

B | 17 |

C | 18 |

D | 19 |

Vopt is clearly = 60

For Vgreedy use the table (Do not take the fraction as per the question),

Item 4 picked,

Profit = 24

Remaining weight = 11 – 2 = 9

Next item 3 picked (item 1 cannot be picked since its capacity is greater than available capacity),

Profit = 24 + 20 = 44

Remaining capacity = 9 – 4 = 5

Now no item can be picked with available capacity.

So Vgreedy = 44

∴ Vopt – Vgreedy = 60 – 44 = 16

Question 27 |

Which one of the following algorithm design techniques is used in finding all pairs of shortest distances in a graph?

A | Dynamic programming |

B | Backtracking |

C | Greedy |

D | Divide and Conquer |

Question 28 |

Give the correct matching for the following pairs:

A. O(log n) 1. Selection sort

B. O(n) 2. Insertion sort

C. O(nlog n) 3. Binary search

D. O(n2) 4. Merge sort A | A – R B – P C – Q D – S |

B | A – R B – P C – S D – Q |

C | A – P B – R C – S D – Q |

D | A – P B – S C – R D – Q |

Selection = O(n)

Merge sort = O(n log n)

Insertion sort = O(n2)

Question 29 |

(a) Solve the following recurrence relation:

xn = 2xn-1 - 1, n>1 x1 = 2

(b) Consider the grammar

S → Aa | b A → Ac | Sd | ε

Construct an equivalent grammar with no left recursion and with minimum number of production rules.

A | Theory Explanation. |

Question 30 |

A sorting technique is called stable if

A | it takes O (nlog n) time |

B | it maintains the relative order of occurrence of non-distinct elements |

C | it uses divide and conquer paradigm |

D | it takes O(n) space |

Question 31 |

Suppose we want to arrange the n numbers stored in any array such that all negative values occur before all positive ones. Minimum number of exchanges required in the worst case is

A | n - 1 |

B | n |

C | n + 1 |

D | None of the above |

Now we will swap 1st no. with nth no. and then 2nd no. with (n-1)th no. and then 3rd no. with (n-2)th and so on. Like this we will have to do n/2 swaps in worst case.

Question 32 |

If one uses straight two-way merge sort algorithm to sort the following elements in ascending order:

20, 47, 15, 8, 9, 4, 40, 30, 12, 17

then the order of these elements after second pass of the algorithm is:

A | 8, 9, 15, 20, 47, 4, 12, 17, 30, 40 |

B | 8, 15, 20, 47, 4, 9, 30, 40, 12, 17 |

C | 15, 20, 47, 4, 8, 9, 12, 30, 40, 17 |

D | 4, 8, 9, 15, 20, 47, 12, 17, 30, 40 |

Question 33 |

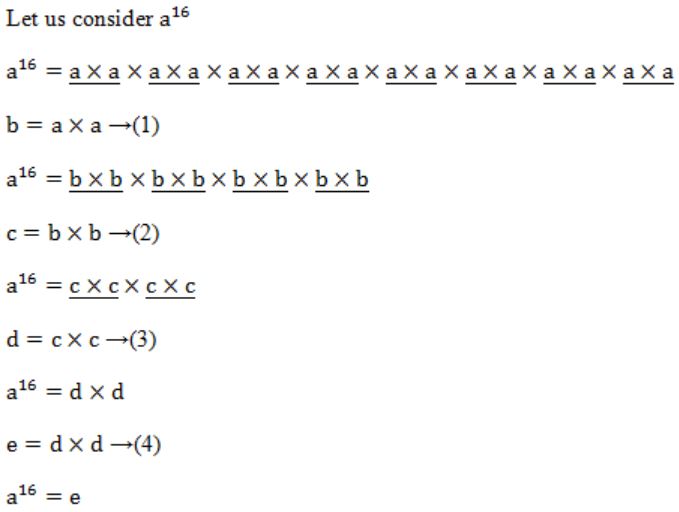

If n is a power of 2, then the minimum number of multiplications needed to compute a* is

A | log2 n |

B | √n |

C | n-1 |

D | n |

We require 4 multiplications to calculate a16 .....(I)

→ Like that 3 multiplications requires to calculate a8 .....(II)

I, II are satisfied with the option A.

Question 34 |

The minimum number of record movements required to merge five files A (with 10 records), B (with 20 records), C (with 15 records), D (with 5 records) and E (with 25 records) is:

A | 165 |

B | 90 |

C | 75 |

D | 65 |

10, 20, 15, 5, 25

Merge 5 & 10:

5+10 = 15 movements

Now the list is

15, 20, 15, 25

Merge 15 & 15:

15+15 = 30 movements

Now the list is

30, 20, 25

Merge 20 & 25:

20+25 = 45 movements

Now the list is

30, 45

Merge 30 & 45:

30+45 = 75 movements

∴ Total no. of movements

= 15+30+45+75

= 165

Question 35 |

If T1 = O(1), give the correct matching for the following pairs:

(M) Tn = Tn−1 + n (U) Tn = O(n) (N) Tn = Tn/2 + n (V) Tn = O(nlogn) (O) Tn = Tn/2 + nlogn (W) T = O(n2) (P) Tn = Tn−1 + logn (X) Tn = O(log2n)

A | M – W N – V O – U P - X |

B | M – W N – U O – X P - V |

C | M – V N – W O – X P - U |

D | None of the above |

(N) Apply Master's theorem

T(n) = θ(n) = O(n)

(O) Apply Master's theorem

T(n) = θ(n logn) = O(n logn)

(P) Here we are adding the log of firstn natural numbers.

So,

Tn = log1 + log2 + log3 + ... + logn

= log (1×2×...n)

= log(n!)

= θ(n logn)

Question 36 |

Let A be an n×n matrix such that the elements in each row and each column are arranged in ascending order. Draw a decision tree which finds 1st, 2nd and 3rd smallest elements in minimum number of comparisons.

A | Theory Explanation. |

Question 37 |

(a) Consider the following algorithm. Assume procedure A and procedure B take O(1) and O(1/n) unit of time respectively. Derive the time complexity of the algorithm in O-notation.

algorithm what (n)

begin

if n = 1 then call A

else begin

what (n-1);

call B(n)

end

end.

(b) Write a constant time algorithm to insert a node with data D just before the node with address p of a singly linked list.

A | Theory Explanation. |

Question 38 |

Let s be a sorted array of n integers. Let t(n) denote the time taken for the most efficient algorithm to determined if there are two elements with sum less than 1000 in s. Which of the following statements is true?

A | t(n) is O(1) |

B | n ≤ t(n) ≤ n log2 n |

C | n log2 n ≤ t(n) < (n/2) |

D | t(n) = (n/2) |

Question 39 |

Consider the following functions

Which of the following is true?

A | h(n) is O (f(n)) |

B | h(n) is O (g(n)) |

C | g(n) is not O (f(n)) |

D | f(n) is O(g(n)) |

Then

f(n) = 3(n32) = 3*(210)32 = 3*2320

g(n) = 2320

h(n) = 1024!

So relation between the functions can be:

f(n) and g(n) are of same order, so f(n) is O(g(n)) and g(n) = O(f(n)). Option C is wrong.

h(n) is n! Which is of higher order than f(n) and g(n). So options A and B are wrong.

Question 40 |

Let G be an undirected connected graph with distinct edge weight. Let emax be the edge with maximum weight and emin the edge with minimum weight. Which of the following statements is false?

A | Every minimum spanning tree of G must contain emin |

B | If emax is in a minimum spanning tree, then its removal must disconnect G |

C | No minimum spanning tree contains emax |

D | G has a unique minimum spanning tree |

Minimum Spanning Tree:

Question 41 |

A recursive program to compute Fibonacci numbers is shown below. Assume you are also given an array f[0…..m] with all elements initialized to 0.

fib(n) {

if (n > M) error ();

if (n == 0) return 1;

if (n == 1) return 1;

if (▭) _________________(1)

return ▭ ____________(2)

t = fib(n – 1) + fib (n – 2);

▭__________(3)

return t;

}

(a) Fill in the boxes with expressions/statements to make fib() store and reuse computed Fibonacci values. Write the box number and the corresponding contents in your answer book.

(b) What is the time complexity of the resulting program when computing fib(n)?

A | Theory Explanation is given below. |

(2) f[n-2];

(3) f[n-2] = +;

(b) The time complexity of the resulting program when computing fib(n) is Θ(n).

Question 42 |

An array contains four occurrences of 0, five occurrences of 1, and three occurrences of 2 in any order. The array is to be sorted using swap operations (elements that are swapped need to be adjacent).

(a) What is the minimum number of swaps needed to sort such an array in the worst case?

(b) Give an ordering of elements in the above array so that the minimum number of swaps needed to sort the array is maximum.

A | Theory Explanation is given below. |

In Bubble sort maximum no. of swap is done when the elements are in non-increasing order, i.e.,

{2, 2, 2, 1, 1, 1, 1, 1, 0, 0, 0, 0}

Pass 1 - 9 swaps

Pass 2 - 9 swaps

Pass 3 - 9 swaps

Pass 4 - 4 swaps

Pass 5 - 4 swaps

Pass 6 - 4 swaps

Pass 7 - 4 swaps

Pass 8 - 4 swaps

Pass 9 - 0 swaps

Pass 10 - 0 swaps

Pass 11 - 0 swaps

Total swaps = 47

(b) Same as part (a)

(a)

While traversing the tree we will get value,

E.val = 12

(b) While traversing the parse tree we will get 10 Reductions.

Question 43 |

Randomized quicksort is an extension of quicksort where the pivot is chosen randomly. What is the worst case complexity of sorting n numbers using randomized quicksort?

A | O(n) |

B | O(n log n) |

C | O(n2) |

D | O(n!) |

Question 44 |

Let f(n) = n2logn and g(n) = n(logn)10 be two positive functions of n. Which of the following statements is correct?

A | f(n) = O(g(n) and g(n) ≠ O(f(n)) |

B | g(n) = O(f(n) and f(n) ≠ O(g(n)) |

C | f(n) ≠ O(g(n)) and g(n) ≠ O(f(n)) |

D | f(n) = O(g(n)) and g(n) = O(f(n)) |

Cancel nlogn from f(n), g(n)

f(n) = n; g(n) = (logn)9

n is too large than (logn)9

So f(n)! = O(g(n)) and g(n) = O(f(n))

Question 45 |

Consider a weighted undirected graph with vertex set V = {n1,n2,n3,n4,n5,n6} and edge set

E = {(n1,n2,2),(n1,n3,8),(n1,n6,3),(n2,n4,4),(n2,n5,12),(n3,n4,7),(n4,n5,9),

(n4,n6,4)}. The third value in each tuple represents the weight of the edge

specified in the tuple.

(a) List the edges of a minimum spanning tree of the graph.

(b) How many distinct minimum spanning trees does this graph have?

(c) Is the minimum among the edge weights of a minimum spanning tree unique overall possible minimum spanning trees of a graph?

(d) Is the maximum among the edge weights of a minimum spanning tree unique over all possible minimum spanning trees of a graph?

A | Theory Explanation is given below. |

(c) Yes, it always. Because 'the edge weight 2 is unique'.

(d) Yes, it always. Because 'the edge weight 9 is unique'.

Question 46 |

The solution to the recurrence equation T(2k) = 3 T(2k-1) + 1, T(1)=1, is:

A |  |

B |  |

C |  |

D |  |

T(1)=1

k=0; T(1) = 3T(2-1)+1

k=1; T(2) = 3T(20)+1 = 3(1)+1 = 4

k=2; T(4) = 3T(21)+1 = 3(4)+1 = 13

k=3; T(8) = 3T(22)+1 = 3(13)+1 = 40

k=4; T(16) = 3T(23)+1 = 3(40)+1 = 121

Simply go through the options.

Option B:

k=4 ⇒ (34+1-1)/2

⇒ (243-1)/2

⇒ 121

Question 47 |

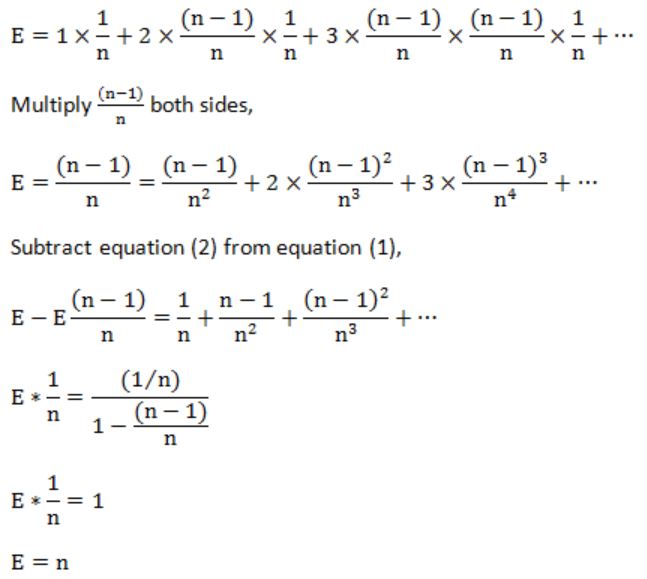

Consider the following algorithm for searching for a given number x in an unsorted array A[1...n] having n distinct values:

1. Choose an i uniformly at random from 1...n;

2. If A[i]=x then Stop else Goto 1;

Assuming that x is present in A, what is the expected number of comparisons made by the algorithm before it terminates?

A | n |

B | n-1 |

C | 2n |

D | n/2 |

Question 48 |

The running time of the following algorithm

Procedure A(n) If n <= 2 return(1) else return (A(⌈√n⌉));

is best described by:

A | O(n) |

B | O(log n) |

C | O(log log n) |

D | O(1) |

Let n=2m

So, T(2m) = T(2m/2)+1

We substitute T(2m) = S(m),

So now the equation becomes,

S(m) = S(m/2)+1

Now after applying master's theorem,

S(m) = O(log m)

T(n) = O(log log n)

Question 49 |

Fill in the blanks in the following template of an algorithm to compute all pairs shortest path lengths in a directed graph G with n*n adjacency matrix A. A[i,j]equals if there is an edge in G from i to j, and 0 otherwise. Your aim in filling in the blanks is to ensure that the algorithm is correct.

INITIALIZATION: For i = 1 … n

{For j = 1 … n

{ if A[i,j]=0 then P[i,j] = _______ else P[i,j] =____;}

ALGORITHM: For i = 1 …n

{ For j = 1 …n

{For k = 1 …n

{P[__,___]=min{_______,_______};}

}

}

- (a) Copy the complete line containing the blanks in the Initialization step and fill in the blanks.

(b) Copy the complete line containing the blanks in the Algorithm step and fill in the blanks.

(c) Fill in the blank: The running time of the Algorithm is O(____).

A | Theory Explanation is given below. |

{ for j = 1 ... n

{ if a[i,j] = 0 then P[i,j] = infinite;

else P[i,j] = a[i,j];

}

}

(b) ALGORITHM: For i = 1 ... n

{ for j = 1 ... n;

{ for k = 1 ... n

{

P[i,j] = min (P[i,j], P[i,k]+P[k,j])

}

}

}

(c) Actual graph:

MST: There are 2 possible MST's

Question 50 |

Consider the following C function.

float f(float x, int y)

{

float p, s; int i;

for (s=1, p=1, i=1; i < y; i ++)

{

p*= x/i;

s+=p;

}

return s;

}

For large values of y, the return value of the function f best approximates

A | Xy |

B | ex |

C | ln(1+x) |

D | Xx |

S = S+P

Iteration 1:

P=1; i=1; S=1

P=x

S = 1+x

Iteration 2:

P=x; S = 1+x; i=2

P = x * x/2 = x2/2

Iteration 3:

P = x2/2; S = 1+x+x2/2; i=3

P = (x2/2)(x/3) = x3/6

S = 1 + x + x2/2 + x3/6

Continue upto n

Then f( ) returns:

S = 1 + x/1 + x2/2⋅1 + x3/3⋅2 + ...

= 1 + x/1! + x2/2! + x3/3! + ... + xn/n!

= ex